The Prompt Engineer as a Language Model Psychologist

A Prompt Engineer is a specialist who designs, tests, and refines the instructions (prompts) given to Large Language Models (LLMs) to control their behavior and elicit high-quality, accurate, and safe outputs. They are a unique blend of a creative writer, a rigorous scientist, and a technical interpreter. They dive deep into the logic of language models to understand how different inputs influence the AI's reasoning, persona, and final response.

This role is essential for transforming general-purpose LLMs into specialized, reliable tools that can perform specific business tasks. A Prompt Engineer works to maximize model performance, reduce errors and hallucinations, and ensure the AI's outputs align perfectly with brand voice and safety guidelines. They are the critical bridge between abstract human intent and concrete machine interpretation, making generative AI practical and valuable for the enterprise.

Essential Skills for a Prompt Engineer

The foremost skill for a Prompt Engineer is an exceptional command of language, particularly English, with a deep understanding of nuance, tone, ambiguity, and structure. They must possess strong logical reasoning and analytical abilities to systematically diagnose why a model is producing a certain output and formulate a hypothesis for how to improve it. An iterative, scientific mindset for experimentation—hypothesize, test, analyze, repeat—is fundamental to the role.

While not always a heavy coder, a Prompt Engineer is often required to have proficiency in Python for scripting automated tests and interacting with model APIs. They must have deep familiarity with the specific behaviors and capabilities of major LLMs from providers like OpenAI, Anthropic, and Google. Understanding key model parameters like 'temperature' and 'top_p' and concepts like context windows is crucial for effective model control.

The Prompt Engineer's Core Technology Stack

The primary "technology" for a Prompt Engineer is the natural language interface of the LLMs themselves, typically accessed through development playgrounds like the OpenAI Playground or a company's internal tools. To manage their work at scale, they use version control systems like Git to track changes to prompts, treating them with the same rigor as source code.

For systematic evaluation, the stack includes Python with data analysis libraries like Pandas for creating test datasets and analyzing results. They increasingly rely on AI observability platforms like LangSmith or custom internal frameworks to trace the performance of prompts within larger applications. The ability to work within a modern development environment, including IDEs like VS Code, is also standard.

Mastering Advanced Prompting Techniques

An expert Prompt Engineer moves far beyond simple one-line instructions. They must master a portfolio of advanced techniques to elicit complex behavior from the model. This includes Few-Shot prompting, where they provide examples of the desired behavior within the prompt itself, and Chain-of-Thought (CoT) prompting, where they instruct the model to "think step-by-step" before giving a final answer, improving its reasoning capabilities.

Their expertise also covers creating highly structured prompts, often using formats like Markdown or XML tags to clearly delineate instructions, context, examples, and desired output formats. They are also skilled in "red teaming," an adversarial practice where they intentionally try to break the model's safety guardrails or provoke unwanted behavior to identify and patch vulnerabilities before they are exposed to users.

Systematic Testing and Evaluation

Effective prompting is a science, not just a creative art. A professional Prompt Engineer develops and executes systematic evaluation frameworks to measure the quality of their prompts objectively. This involves creating "golden datasets"—curated sets of test cases with ideal or expected outputs—to quantitatively score how well a given prompt performs across a wide range of scenarios, removing guesswork and anecdotal evidence from the optimization process.

They often build automated test harnesses, typically using Python scripts, to run prompts against these datasets at scale. The results are then evaluated based on predefined metrics, such as accuracy, adherence to formatting rules, or stylistic alignment with a brand voice. This rigorous, data-driven approach is what separates professional prompt engineering from casual experimentation and is essential for building reliable AI products.

Building and Maintaining Prompt Libraries

In a professional setting, prompts are critical assets that must be managed like software code. A key responsibility of a Prompt Engineer is to build, document, and maintain a centralized and version-controlled library of prompts. This practice ensures that all teams across a company are using the most effective, secure, and up-to-date prompts for various tasks, preventing fragmentation and redundant work.

This "prompt-as-code" methodology brings engineering discipline to the craft. It allows for A/B testing different prompt versions, safely rolling back changes if a new prompt causes performance to degrade, and creating a shared repository of institutional knowledge. This organized approach is crucial for scaling AI development and maintaining quality and consistency across all AI-powered features.

Collaboration with Developers and Stakeholders

A Prompt Engineer acts as a crucial link between technical and non-technical teams. They work hand-in-hand with software and AI engineers who integrate their prompts into larger applications, providing clear documentation, usage guidelines, and context on why a prompt is designed a certain way. They serve as the translator between high-level linguistic goals and the technical reality of the model.

They also collaborate closely with product managers, UX writers, and legal teams to fully understand the desired behavior of the AI. They must be able to take abstract business requirements, such as "the AI assistant must be more empathetic but not overly familiar," and convert them into concrete, testable instructions within a prompt. This cross-functional communication skill is vital to their success.

Persona and Tone Crafting

One of the most visible impacts of a Prompt Engineer is their role in shaping the AI's personality. They are responsible for writing the core "system prompts" or "meta-prompts" that define the AI's character, its tone of voice, its communication style, and its ethical boundaries. This ensures that every AI-generated response is consistent with a company's brand identity, building user trust and creating a cohesive experience.

This process is a sophisticated blend of creative writing, branding, and technical precision. The engineer will meticulously craft and test instructions to produce an AI that might be witty and helpful for a consumer brand or formal and hyper-accurate for a financial analysis tool. They are, in effect, the architects of the AI's personality, which is a critical component of the user experience.

Output Formatting and Parsing

A significant challenge in building AI applications is ensuring the LLM's output can be reliably understood and used by other software. An expert Prompt Engineer is skilled at compelling the model to return its response in a strictly structured format, such as valid JSON with a predefined schema or a specific XML structure. This is a non-negotiable requirement for integrating LLMs into automated, production-grade workflows.

To achieve this, they employ various techniques, including providing explicit formatting instructions, showing examples of the desired output in the prompt, or leveraging model-specific features like "function calling" or "tool use" that are designed for structured data extraction. This skill turns the probabilistic, free-form text of an LLM into predictable data that can power other applications, making automation possible.

Reducing Hallucinations and Improving Accuracy

Large Language Models are prone to "hallucinating"—confidently stating incorrect information. A primary function of a Prompt Engineer is to design strategies that mitigate this risk and ground the model's responses in factual data. They craft prompts that strictly limit the model's creative freedom and guide its reasoning process to be more analytical and evidence-based.

This is a cornerstone of building trustworthy AI, especially in Retrieval-Augmented Generation (RAG) systems. The engineer will meticulously design the prompt to instruct the model to base its answer *exclusively* on the source documents provided in the context. They often include an explicit instruction to state "I do not have enough information to answer" if the answer cannot be found in the provided text, a crucial technique for building accurate and reliable AI.

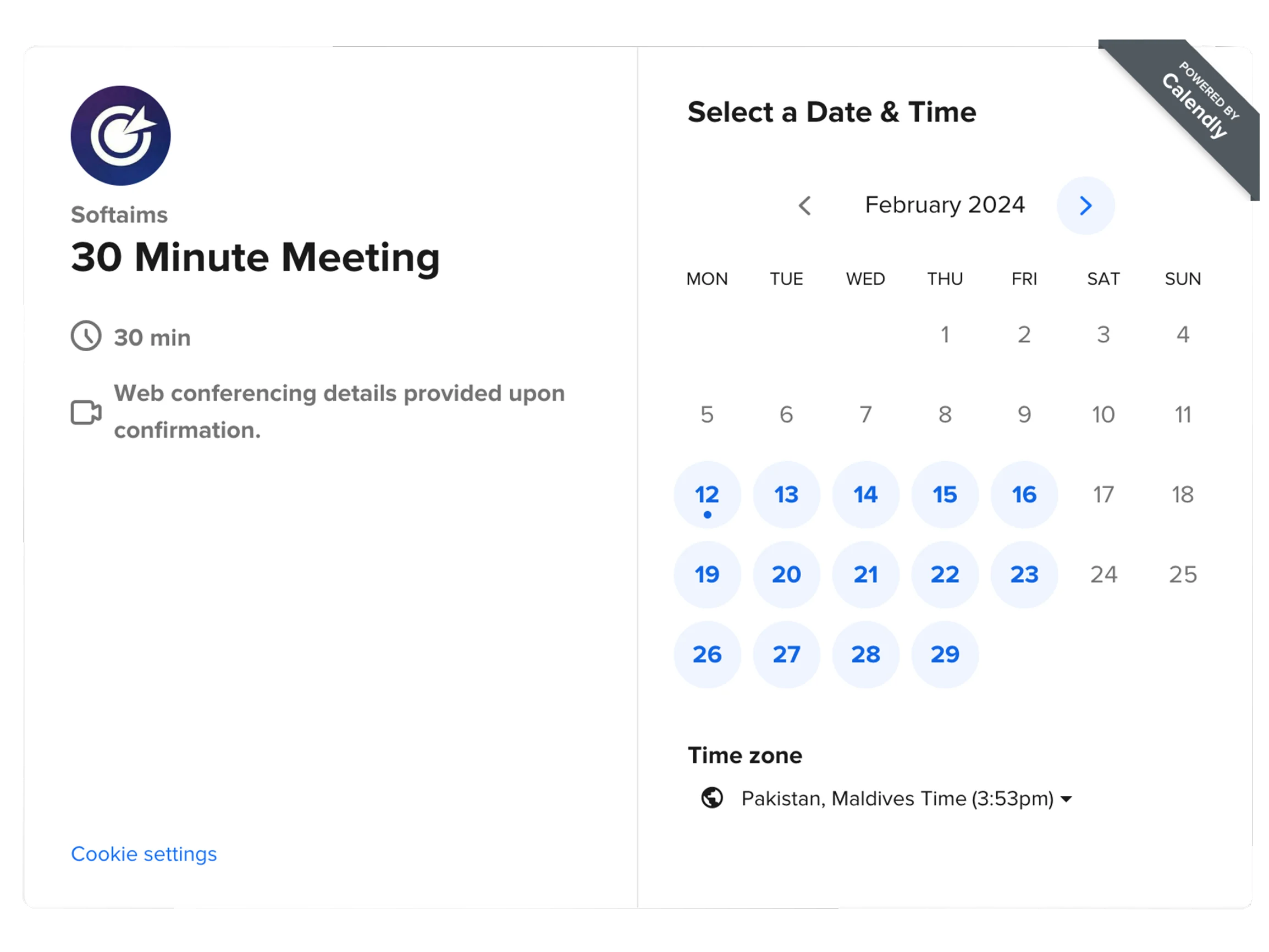

How Much Does It Cost to Hire a Prompt Engineer

The Prompt Engineer is a novel yet highly strategic role that has emerged as critical for companies serious about deploying generative AI. The demand for professionals who can effectively steer and de-risk powerful language models has exploded, and their compensation reflects this scarcity and high-impact potential. Salaries are very competitive, often on par with those of specialized data scientists or AI engineers.

Hiring a Prompt Engineer is an investment in quality control, safety, and performance for a company's AI initiatives. Their ability to maximize the value and minimize the risk of LLMs makes them a vital hire. Below is an estimated salary overview for an experienced, full-time Prompt Engineer in various global tech markets.

| Country |

Average Annual Salary (USD) |

| United States |

$120,000 - $190,000+ |

| United Kingdom |

$90,000 - $150,000+ |

| Canada |

$110,000 - $170,000+ |

| Australia |

$115,000 - $180,000+ |

| Germany |

$100,000 - $160,000+ |

| Switzerland |

$140,000 - $220,000+ |

| India |

$40,000 - $85,000+ |

| Singapore |

$110,000 - $180,000+ |

| Israel |

$130,000 - $200,000+ |

| Netherlands |

$95,000 - $155,000+ |

When to Hire Dedicated Prompt Engineers Versus Freelance Prompt Engineers

Hiring a dedicated, full-time Prompt Engineer is essential for companies where the quality, safety, and personality of the AI are core to the product experience. If you are building a customer-facing AI assistant, an advanced content generation tool, or any application where brand voice and reliability are paramount, you need a dedicated expert. They will own the prompt library, conduct continuous A/B testing, and serve as the long-term guardian of the AI's behavior.

A freelance Prompt Engineer is a powerful resource for specific, well-defined projects. This is ideal for optimizing a set of prompts for a new AI feature, conducting a one-time "red team" security audit on an existing application, developing the initial persona for a proof-of-concept, or training a development team on advanced prompting techniques. Freelancers provide elite, specialized skills to solve a particular problem with speed and focus.

Why Do Companies Hire Prompt Engineers

Companies hire Prompt Engineers to solve the critical "last-mile" problem in generative AI: translating a powerful, general-purpose model into a specialized and reliable business tool. LLMs are not plug-and-play solutions; without expert guidance, they can be unpredictable, inaccurate, and unsafe. A Prompt Engineer provides the essential layer of control and refinement needed for production-grade applications.

They are hired to directly improve product quality and reduce business risk. By systematically optimizing prompts, they increase the accuracy and helpfulness of AI features, which improves user satisfaction. By implementing safety guardrails and reducing hallucinations, they protect the brand from reputational damage. Ultimately, they are hired to make a company's investment in AI technology predictable, safe, and profitable.

In conclusion, the Prompt Engineer is a pivotal new role in the age of generative AI, acting as the indispensable translator between human goals and machine intelligence. They combine the nuance of a linguist with the rigor of a scientist to guide and shape the behavior of the world's most powerful language models. As AI becomes more deeply integrated into our products and services, these architects of AI conversation will be among the most critical and valuable talents in the technology industry.