The Power of the Generative AI Engineer as a Full-Stack Intelligence Builder

A Generative AI Engineer is a comprehensive professional who designs, develops, and deploys intelligent software systems capable of creating new content—such as text, images, code, or video—across the entire application stack. Unlike a Data Scientist who focuses primarily on model research, or a Machine Learning Engineer who focuses on model production, the Generative AI Engineer specializes in integrating cutting-edge Foundation Models and Large Language Models (LLMs) into production-ready applications.

This role is the cornerstone of modern GenAI product development, responsible for selecting the correct model architecture (e.g., GANs, Transformers), managing prompt and knowledge ingestion pipelines, building robust API layers for model access, and continuously monitoring the system's creative performance and impact on business metrics. The Generative AI Engineer is essential for transforming theoretical algorithms into scalable, profitable, and reliable enterprise content solutions.

Essential Skills for a Generative AI Engineer

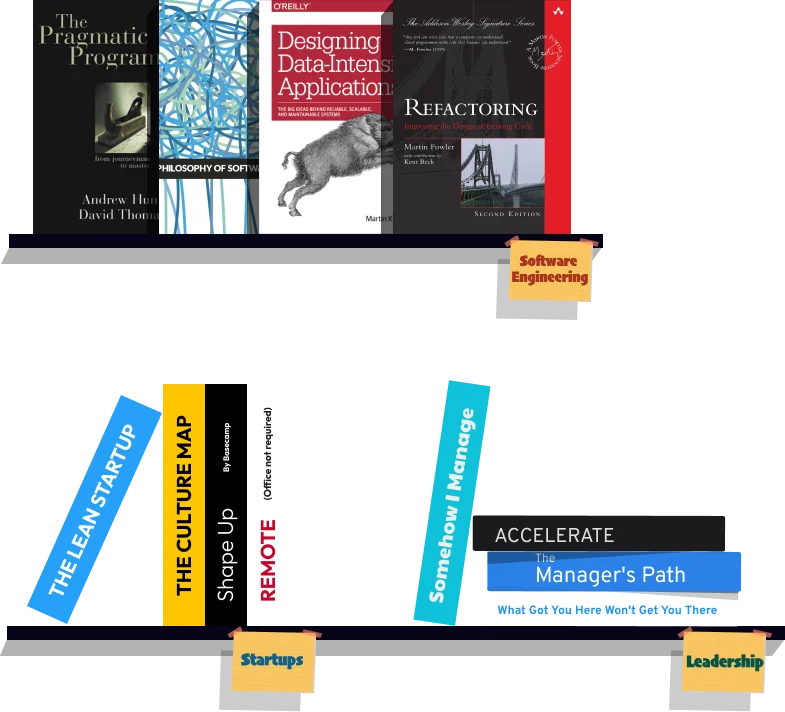

A proficient Generative AI Engineer must possess a strong foundation in Software Engineering and Deep Learning principles. Core skills include mastery of Python (with libraries like Hugging Face Transformers) and often a second language like JavaScript or Node.js (for frontend/API services), alongside deep knowledge of GenAI architectures.

Crucial specialized skills include expertise in MLOps (specifically LLMOps), involving tools for experimentation tracking, model versioning, and deployment. The engineer must be adept at cloud computing platforms (AWS, Azure, GCP) and possess strong data engineering abilities to build and maintain the high-quality, high-volume data streams necessary for fine-tuning and serving Generative AI models.

Generative AI Engineer's Core Technology Stack

The Generative AI Engineer operates on a stack centered around specialized model tuning and retrieval systems. The Modeling Layer uses frameworks like PyTorch or TensorFlow for foundation model training and tuning. The Data Layer relies on tools like Apache Spark for massive dataset processing, and Vector Databases (Pinecone, MongoDB Atlas) for Retrieval-Augmented Generation (RAG) tasks.

The Deployment Layer involves containerization with Docker and orchestration with Kubernetes to manage scalable, fault-tolerant model serving. LLMOps platforms (MLFlow, Kubeflow) are indispensable for managing the entire GenAI lifecycle, from prompt engineering experiments to production monitoring.

Mastering LLMOps and Model Deployment

The most valuable skill is the practical mastery of LLMOps. This involves building a sustainable Generative AI system by automating model training/fine-tuning via CI/CD pipelines, managing model and prompt repositories, and designing A/B testing infrastructure to safely evaluate new model versions or prompt engineering strategies against production traffic.

Engineers must also master low-latency inference serving, optimizing models for low latency and high throughput. Techniques like model quantization, ONNX export, and serverless deployment are critical skills to ensure the GenAI application remains responsive and cost-efficient under heavy load.

Mastering Data and Knowledge Ingestion Pipelines

A high-level Generative AI Engineer must be proficient in architecting and maintaining robust knowledge ingestion pipelines. This critical stage involves:

- Vectorization and Embedding Management: Creating dense vector representations of proprietary data to enable efficient similarity search for RAG.

- Data Governance: Implementing standards for data quality, security, and access control for sensitive training and grounding data.

- ETL/ELT Workflows: Designing efficient Extract, Transform, and Load (ETL) processes using tools like Apache Airflow or Prefect to prepare and feed data for model training and serving.

Mastery of these pipelines ensures the GenAI system receives high-quality, non-stale features, preventing the catastrophic degradation of model performance in production (known as model drift).

Designing and Integrating Intelligent Systems

The Generative AI Engineer must be skilled in designing the architecture for end-to-end intelligent applications. This involves:

[Image of a Retrieval-Augmented Generation (RAG) system architecture]

- System Architecture: Deciding whether to use microservices, serverless functions, or monolithic architectures for GenAI deployment.

- API Gateway Design: Building secure and scalable REST or gRPC APIs to allow downstream applications to query the LLM.

- AI Orchestration: Using frameworks (e.g., LangChain, Semantic Kernel) to combine multiple models, tools, and data sources into complex, multi-step agents.

Developers must ensure the GenAI component integrates seamlessly with traditional software elements, providing reliable and predictable outcomes despite the probabilistic nature of the underlying models.

Deployment and Observability

The Generative AI Engineer is the primary owner of the production environment for GenAI models. Deployment involves using cloud services and infrastructure-as-code (Terraform) to provision the necessary compute resources.

Observability and monitoring are paramount. The engineer must set up monitoring dashboards to track data drift (change in input data distribution), model drift (change in model performance over time), and key business metrics. They must implement automated alerting systems to detect and flag performance issues immediately for intervention and retraining or prompt revision.

Backend and API Integration Skills

The Generative AI Engineer must possess deep backend development expertise to manage the interaction between the LLM and the rest of the organization's technology stack. This involves wrapping the model logic into a high-performance serving layer and handling complex logic for managing session state, user authentication, and authorization for sensitive data access.

They are responsible for ensuring the entire system is fault-tolerant and highly available, handling potential model failures gracefully and providing fallback mechanisms to maintain a smooth user experience.

Security and Ethical AI Auditing

Security in GenAI systems requires managing model access control, ensuring the integrity of training and grounding data, and protecting model weights from theft. The engineer must implement rigorous checks to prevent data leakage and ensure the AI API is shielded from common web vulnerabilities.

The ethical responsibility involves running fairness and bias tests throughout the model lifecycle, documenting model decisions (interpretability), and implementing LLM guardrails against malicious input (e.g., prompt injection) to ensure the deployed AI adheres to corporate and legal standards.

Testing and Debugging the End-to-End System

Testing a GenAI system is complex, requiring multiple layers: unit tests for code, data validation tests, offline model evaluation (using metrics like AUC, F1-score, or BLEU/ROUGE for text generation), and crucial online A/B tests in the production environment. The engineer must design test harnesses that simulate real-world data and usage patterns.

Debugging involves tracing failures through the entire pipeline—from the knowledge base to the model serving API—to diagnose whether an error is caused by flawed data, a deployment issue, or a core model/prompt bug. This holistic debugging capability is a hallmark of a skilled Generative AI Engineer.

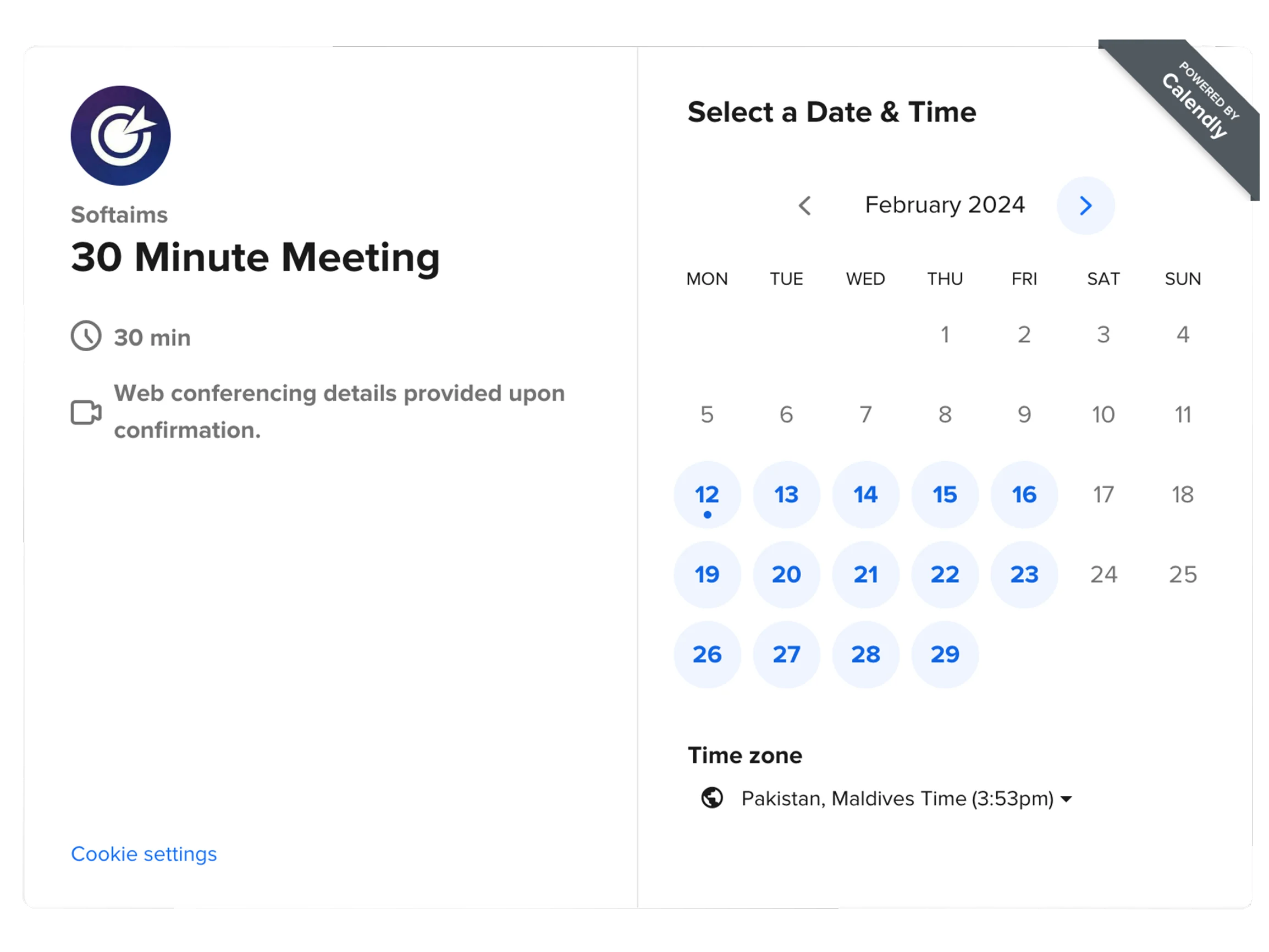

How Much Does It Cost to Hire a Generative AI Engineer

The Generative AI Engineer role commands one of the highest salaries in the tech industry, reflecting the combination of advanced machine learning expertise, software engineering maturity, and LLMOps knowledge required. Salaries typically align with those of Senior Software Architects or Principal Machine Learning Engineers.

| Country |

Average Annual Base Salary (USD) |

Senior-Level Salary Range (USD) |

| United States (Silicon Valley) |

$180,000+ |

$220,000 - $350,000+ |

| United States (NYC/Seattle) |

$160,000 - $250,000 |

$200,000 - $300,000+ |

| United Kingdom (London) |

$115,000 - $150,000 |

$140,000 - $210,000+ |

| Germany (Berlin) |

$90,000 - $130,000 |

$110,000 - $165,000+ |

| India (Bangalore) |

$40,000 - $70,000 (INR 30L - 55L) |

$60,000 - $100,000+ (INR 50L - 80L+) |

| Singapore |

$95,000 - $160,000 |

$160,000 - $240,000+ |

When to Hire Dedicated Generative AI Engineers Versus Freelance Generative AI Engineers

For building and maintaining the foundational GenAI infrastructure—the LLMOps platform, vector store architecture, and core production models/agents—hiring a dedicated Generative AI Engineer is mandatory. This role requires deep commitment to continuous system maintenance, optimization, and integration with the company's long-term data strategy.

A freelance Generative AI Engineer is highly effective for specific, complex, and time-bound projects such as migrating a model from one cloud provider to another, setting up the initial RAG pipeline (PoC), or performing a specialized prompt engineering and model tuning project on an existing deployed LLM. Their high-level expertise can accelerate critical infrastructure improvements.

Why Do Companies Hire Generative AI Engineers

Companies hire Generative AI Engineers to create measurable business impact by moving cutting-edge LLMs and Foundation Models from the lab to the production environment, at scale. They are the professionals who ensure that models—whether they automate content creation, power advanced chatbots, or drive generative design—are robust, reliable, and continuously provide value to the end-user.

By investing in the Generative AI Engineer, companies secure the capability to build and scale proprietary intelligent applications, future-proofing their core business processes and establishing a strategic advantage over competitors who rely solely on off-the-shelf, generalized AI services.

In conclusion, the Generative AI Engineer is the key architect of intelligent applications, possessing the rare combination of deep learning theory and production-grade software engineering skills necessary to deliver scalable, reliable, and accountable GenAI systems. You can watch a quick breakdown of their salary expectations in the US and India here: [Generative AI Engineer Salary in 2025].